Glossary term: Apparent Magnitude

Description: Apparent magnitude is a measure of how bright a celestial body appears to an observer. For historical reasons, the magnitude scale assigns larger numbers to fainter objects. Magnitude is a logarithmic scale, with a difference of five magnitudes corresponding to a factor of 100 in measured brightness. There are many magnitude scales because brightness can be measured at different wavelengths and with different techniques. The common "visual magnitude" scale is set so that the bright star Vega has an apparent magnitude of zero. On this scale, Sirius, the brightest star in the night sky, has magnitude -1.46, and the magnitudes of the Sun and the full Moon are -26.7 and -12.7, respectively. The negative numbers indicate that these objects appear brighter than Vega. In very dark conditions, people with excellent vision can see stars as faint as about visual magnitude 6. The Hubble Ultra Deep Field reaches a visual magnitude near 31. That is 25 magnitudes, or five steps of five magnitudes, beyond magnitude 6, so these objects are about 100 to the power five, or 10,000,000,000, times fainter than a magnitude 6 star.
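The rule that five magnitudes correspond to a factor of 100 in brightness can be turned into a short calculation. The following is a minimal sketch in Python, not part of the glossary itself; the function name `brightness_ratio` and its parameter names are chosen here purely for illustration.

```python
def brightness_ratio(m_faint: float, m_bright: float) -> float:
    """Return how many times brighter the object with magnitude m_bright
    appears than the object with magnitude m_faint.

    Uses the definition quoted above: a difference of five magnitudes
    corresponds to a factor of 100 in measured brightness.
    """
    return 100.0 ** ((m_faint - m_bright) / 5.0)


# Naked-eye limit (about magnitude 6) compared with the
# Hubble Ultra Deep Field limit (about magnitude 31).
print(brightness_ratio(31.0, 6.0))

# Sirius (magnitude -1.46) compared with Vega (magnitude 0).
print(brightness_ratio(0.0, -1.46))
```

The first call reproduces the factor of about 10,000,000,000 quoted in the description, and the second suggests that Sirius appears a little under four times brighter than Vega on the visual magnitude scale.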

Term and definition status: This term and its definition have been approved by a research astronomer and a teacher

The OAE Multilingual Glossary is a project of the IAU Office of Astronomy for Education (OAE) in collaboration with the IAU Office of Astronomy Outreach (OAO). The terms and definitions were chosen, written and reviewed by a collective effort from the OAE, the OAE Centers and Nodes, the OAE National Astronomy Education Coordinators (NAECs) and other volunteers. You can find a full list of credits here. All glossary terms and their definitions are released under a Creative Commons CC BY-4.0 license and should be credited to "IAU OAE".

In Other Languages

- Arabic: القدر الظاهري

- German: Scheinbare Helligkeit

- Spanish: Magnitud Aparente

- French: Magnitude apparente

- Italian: Magnitudine apparente

- Japanese: 見かけの等級

- Brazilian Portuguese: Magnitude aparente

- Simplified Chinese: 视星等

- Traditional Chinese: 視星等

Related Diagrams

Andromeda Constellation Map

Caption: The constellation Andromeda showing the bright stars and surrounding constellations. Andromeda is surrounded by (going clockwise from the top) Cassiopeia, Lacerta, Pegasus, Pisces, Aries, Triangulum and Perseus. The brightest star in Andromeda (Alpheratz) is in the lower part of the constellation. Together with three stars in Pegasus it forms the asterism known as the "Great Square of Pegasus". The next two bright stars in the constellation (Mirach and Almach) form a line extending north-east from Alpheratz.

Andromeda is a northern constellation and is most visible in the evenings in the Northern Hemisphere autumn. It is visible from all of the Northern Hemisphere and most temperate regions of the Southern Hemisphere but is not visible from Antarctic and Subantarctic regions.

The most famous object in Andromeda, the Andromeda Galaxy, is marked here with a red ellipse and its Messier catalog number M31.

The yellow circle on the left marks the position of the open cluster NGC 752 and the green circle on the right marks NGC 7662 (the Blue Snowball Nebula), a planetary nebula.

The y-axis of this diagram is in degrees of declination with north as up and the x-axis is in hours of right ascension with east to the left. The sizes of the stars marked here relate to the star's apparent magnitude, a measure of its apparent brightness. The larger dots represent brighter stars. The Greek letters mark the brightest stars in the constellation. These are ranked by brightness with the brightest star being labeled alpha, the second brightest beta, etc., although this ordering is not always followed exactly. The dotted boundary lines mark the IAU's boundaries of the constellations and the solid green lines mark one of the common forms used to represent the figures of the constellations. Neither the constellation boundaries, nor the lines joining the stars appear on the sky.

Credit: Adapted by the IAU Office of Astronomy for Education from the original by IAU/Sky & Telescope

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

Crux Constellation Map

Caption: The constellation Crux (commonly known as the Southern Cross or Crux Australis) showing its bright stars and surrounding constellations. The Southern Cross is surrounded by (going clockwise from the top) Centaurus, Carina and Musca. The brightest star is alpha Crucis, which appears at the bottom of the constellation's famous kite shape. The Southern Cross is visible from southern and equatorial regions of the world. In more southerly parts of the world it is circumpolar, so it is always above the horizon. In other parts of the southern hemisphere and in equatorial regions it is most visible in the evenings in the southern hemisphere autumn.

The yellow circles show the locations of two open clusters, NGC 4755 (known as the Jewel Box) and NGC 4609.

The line joining gamma and alpha Crucis (the third and first brightest stars in the Southern Cross) points in the approximate direction of the South Celestial Pole. This has led to the Southern Cross playing an important role in celestial navigation, allowing navigators from different astronomical traditions to find their bearings.

The y-axis of this diagram is in degrees of declination with north as up and the x-axis is in hours of right ascension with east to the left. The sizes of the stars marked here relate to the star's apparent magnitude, a measure of its apparent brightness. The larger dots represent brighter stars. The Greek letters mark the brightest stars in the constellation. These are ranked by brightness with the brightest star being labeled alpha, the second brightest beta, etc., although this ordering is not always followed exactly. The dotted boundary lines mark the IAU's boundaries of the constellations and the solid green lines mark one of the common forms used to represent the figures of the constellations. Neither the constellation boundaries, nor the lines joining the stars appear on the sky.

Credit: Adapted by the IAU Office of Astronomy for Education from the original by IAU/Sky & Telescope.

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

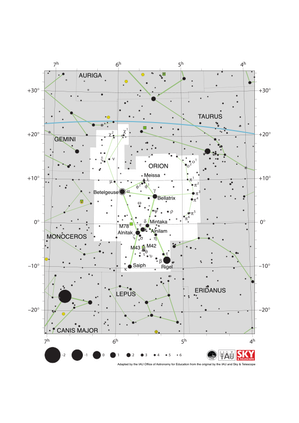

Orion Constellation Map

Caption: The constellation Orion along with its bright stars and surrounding constellations. Orion is surrounded by (going clockwise from the top) Taurus, Eridanus, Lepus, Monoceros and Gemini. Orion’s brightest stars, Betelgeuse and Rigel, appear at the northern (upper on this diagram) and southern (lower) ends of the constellation respectively, with the famous three-star “belt” in the middle.

Orion spans the celestial equator and is thus visible at some time in the year from all of planet Earth. In the most arctic or antarctic regions of the world, some parts of the constellation may not be visible. Orion is most visible in the evenings in the northern hemisphere winter and southern hemisphere summer. The blue line above Orion marks the ecliptic, the path the Sun appears to travel across the sky over the course of a year. The Sun never passes through Orion, but one can occasionally find the other planets of the Solar System and the Moon in Orion.

Just south of Orion’s belt lie two Messier objects, M42 (the Orion Nebula) and M43, marked by green squares. These nebulae, along with M78 (here the green square to the left of the belt), are part of the huge Orion Molecular Cloud Complex. This covers most of the constellation and includes regions where these molecular clouds are collapsing to form young stars.

The y-axis of this diagram is in degrees of declination with north as up and the x-axis is in hours of right ascension with east to the left. The sizes of the stars marked here relate to the star's apparent magnitude, a measure of its apparent brightness. The larger dots represent brighter stars. The Greek letters mark the brightest stars in the constellation. These are ranked by brightness with the brightest star being labeled alpha, the second brightest beta, etc., although this ordering is not always followed exactly. The circle around Betelgeuse indicates that it is a variable star. The dotted boundary lines mark the IAU's boundaries of the constellations and the solid green lines mark one of the common forms used to represent the figures of the constellations. Neither the constellation boundaries, nor the line marking the ecliptic, nor the lines joining the stars appear on the sky.

Credit: Adapted by the IAU Office of Astronomy for Education from the original by IAU/Sky & Telescope

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

Libra Constellation Map

Caption: The constellation Libra along with its bright stars and surrounding constellations. Libra is surrounded by (going clockwise from the top) Serpens Caput, Virgo, Hydra, Centaurus, Lupus, Scorpius and Ophiuchus. Libra lies on the ecliptic (shown here as a blue line), the path the Sun appears to take across the sky over the course of a year. The Sun is in Libra from late October to late November. The other planets of the Solar System can often be found in Libra.

Libra lies just south of the celestial equator and is thus visible at some time in all but the most arctic regions. Libra is most visible in the evenings in the northern hemisphere late spring/early summer and southern hemisphere late autumn/early winter.

The y-axis of this diagram is in degrees of declination with north as up and the x-axis is in hours of right ascension with east to the left. The sizes of the stars marked here relate to the star's apparent magnitude, a measure of its apparent brightness. The larger dots represent brighter stars. The Greek letters mark the brightest stars in the constellation. These are ranked by brightness with the brightest star being labeled alpha, the second brightest beta, etc., although this ordering is not always followed exactly. The dotted boundary lines mark the IAU's boundaries of the constellations and the solid green lines mark one of the common forms used to represent the figures of the constellations. Neither the constellation boundaries, nor the line marking the ecliptic, nor the lines joining the stars appear on the sky.

Credit: Adapted by the IAU Office of Astronomy for Education from the original by IAU/Sky & Telescope

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

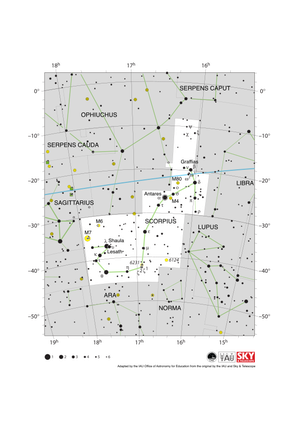

Scorpius Constellation Map

Caption: The constellation Scorpius (commonly called Scorpio) along with its bright stars and surrounding constellations. Scorpius is surrounded by (going clockwise from the top) Ophiuchus, Serpens Caput, Libra, Lupus, Norma, Ara, Corona Australis and Sagittarius. Scorpius’s brightest star, Antares, appears in the heart of the constellation, with the famous tail of Scorpius in the south-east (lower left). Scorpius lies on the ecliptic (shown here as a blue line), the path the Sun appears to take across the sky over the course of a year. The Sun spends only a short time in Scorpius, in late November. The other planets of the Solar System can often be found in Scorpius.

Scorpius lies south of the celestial equator. The whole constellation is not visible from the most arctic regions of the world with parts of Scorpius obscured for observers in northern parts of Asia, Europe and North America. Scorpius is most visible in the evenings in the northern hemisphere summer and southern hemisphere winter.

The yellow circles mark the positions of the open clusters M6, M7 & NGC 6231 while the yellow circles with plus signs superimposed on them mark the globular clusters M4 and M80.

The y-axis of this diagram is in degrees of declination with north as up and the x-axis is in hours of right ascension with east to the left. The sizes of the stars marked here relate to the star's apparent magnitude, a measure of its apparent brightness. The larger dots represent brighter stars. The Greek letters mark the brightest stars in the constellation. These are ranked by brightness with the brightest star being labeled alpha, the second brightest beta, etc., although this ordering is not always followed exactly. The circle around Antares indicates that it is a variable star. The dotted boundary lines mark the IAU's boundaries of the constellations and the solid green lines mark one of the common forms used to represent the figures of the constellations. The blue line marks the ecliptic, the path the Sun appears to travel across the sky over the course of one year. Neither the constellation boundaries, nor the line marking the ecliptic, nor the lines joining the stars appear on the sky.

Credit: Adapted by the IAU Office of Astronomy for Education from the original by IAU/Sky & Telescope

License: Creative Commons Attribution 4.0 International (CC BY 4.0)